Introduction

Your inbox contains some of the most sensitive data you generate: salary negotiations, client details, health conversations, legal correspondence. AI email tools now process all of it. According to Gartner, more than 80% of enterprises will have adopted generative AI by 2026, up from less than 5% in 2023.

For professionals and teams already using AI email tools, this creates a real GDPR exposure, one that most users don't recognize until it's too late.

Unlike traditional email compliance (consent before sending, easy unsubscribe, no spam), AI email personalization operates on the content of emails already in your inbox. It reads language, maps relationships, and infers intent in order to draft replies, prioritize messages, and pull out tasks. This shifts the compliance question from "did you consent to receive this email?" to "who is allowed to read and process what's inside it?"

The answer matters. Many AI email tools were not designed with GDPR in mind, and the gap between what they do and what EU law permits can be wider than their privacy pages suggest. This guide breaks down where the risks sit and how to address them.

TLDR

- AI email tools process personal data and are fully subject to GDPR whenever they handle data of people in the EU, regardless of where the vendor is based

- Prioritize tools with Zero Data Retention agreements, ephemeral processing, and signed Data Processing Agreements (DPAs)

- The biggest risks are unauthorized data sharing with AI providers, excessive retention, and lack of user control

- Architecture matters more than policy language: ephemeral processing is structurally safer than persistent storage

- Not all AI email tools are equal—verify certifications, sub-processor agreements, and data residency before adopting

Why AI Email Personalization Creates New GDPR Challenges

Traditional email compliance focused on bulk marketing: consent before sending, easy unsubscribe, no spam. AI email personalization needs the content itself. To draft a reply in your voice, a tool has to read the thread, work out who the participants are and how they relate to you, and infer what they are asking for. Each of those steps is processing of personal data, so the compliance question moves away from sending rules and toward who can read and process what's inside your inbox.

The Data Volume Problem

AI personalization engines don't just read one email—they often need access to email history, contact patterns, and writing style across dozens or hundreds of threads to function effectively. Under GDPR, each of those data points is personal data, and processing them requires a lawful basis.

When an AI tool analyzes your inbox to learn your communication style, it's processing:

- Metadata: sender, recipient, timestamps

- Message content: subject lines, body text, attachments

- Behavioral patterns: response times, preferred phrasing, relationship context

All of this constitutes personal data under GDPR Article 4(1).

The Third-Party AI Provider Risk

Most AI email tools route email content through large language model APIs (e.g., OpenAI, Anthropic, Google). When this happens, your email data is shared with a third party that processes it on the vendor's behalf, typically as a sub-processor. According to the European Data Protection Board's 2025 guidance on LLMs, providers that collect or retain data for their own purposes, such as model fine-tuning or feature improvement, also act as controllers for that processing. None of this shifts responsibility away from you. Article 28(1) allows your organization to use only processors that provide sufficient guarantees of GDPR compliance, so a provider's misuse of email data can still expose the organization that chose the tool.

Without proper contractual safeguards, routing professional communications through third-party APIs exposes organizations to significant compliance risk. If the AI provider retains email content for model training or lacks adequate data protection agreements, the deploying organization may be held liable for unauthorized data processing.

The EU AI Act Adds Another Layer

The EU AI Act (Regulation 2024/1689), which entered into force in 2024, introduces new statutory obligations. Article 50 requires transparency: AI systems intended to interact directly with natural persons must be designed so users are informed they are interacting with an AI system. Additionally, AI systems used in employment and workers' management—such as recruitment or task allocation based on individual behavior—are classified as high-risk under Annex III, triggering strict record-keeping requirements under Article 12.

For most organizations, this means AI email tools are no longer just a productivity decision—they carry legal accountability that needs to be built into procurement and deployment from day one.

The GDPR Rules That Govern AI Email Processing

Lawful Basis and Consent

Under GDPR Article 6, any processing of personal data requires a lawful basis. For AI email personalization, the most relevant bases are consent and legitimate interest—but legitimate interest requires a documented balancing test and cannot override the fundamental rights of the data subject.

Consent (Article 6(1)(a)):

EDPB Guidelines 05/2020 state that consent bundled into Terms of Service is "presumed not to have been freely given." Valid consent must be freely given, specific, informed, and unambiguous. A buried clause in a tool's Terms of Service is therefore not valid consent under GDPR. The same guidelines point to an "imbalance of power" in employment contexts. Employees can rarely refuse consent without fear of detrimental effects, which makes consent an unreliable basis for workplace AI tools except in exceptional cases.

Legitimate Interest (Article 6(1)(f)):

When relying on legitimate interest, organizations must conduct a three-step test:

- Pursue a legitimate interest

- Demonstrate the processing is necessary to achieve it

- Confirm the data subject's rights do not override that interest

This requires a documented Legitimate Interest Assessment (LIA) completed before processing begins.

Data Minimization and Purpose Limitation

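GDPR Article 5(1)(b) requires data to be collected for "specified, explicit and legitimate purposes" and not further processed in ways incompatible with them (Purpose Limitation). Article 5(1)(c) requires data to be "adequate, relevant and limited to what is necessary" (Data Minimization). Article 5(1)(e) requires that data be kept "for no longer than is necessary" (Storage Limitation).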

Data Minimization in Practice:

AI tools should only process email data strictly necessary for their stated function. An AI that reads every message in a mailbox to "improve its models" beyond the user's stated purpose likely violates this principle.

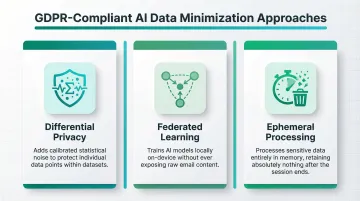

The CNIL's 2026 AI recommendations state that data annotation must be limited to what is "necessary and relevant for training the model." Techniques that help keep processing within those limits include:

- Differential privacy: adds calibrated statistical noise so that outputs reveal little about any individual data point

- Federated learning: trains models locally and shares only model updates, never the raw email content

- Ephemeral processing: processes data in memory and retains nothing after the session
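To illustrate the first technique, here is a minimal sketch of the Laplace mechanism, a standard way to release a count with ε-differential privacy. The query (how many emails in a training set mention a keyword), the function names, and the ε value are hypothetical. The sketch shows the principle only and does not describe how any particular vendor implements it.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Draw one sample from a Laplace(0, scale) distribution.

    The difference of two independent exponential draws, each with mean
    `scale`, is Laplace-distributed with that scale.
    """
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)


def dp_count(true_count: int, epsilon: float, rng: random.Random,
             sensitivity: float = 1.0) -> float:
    """Release `true_count` with epsilon-differential privacy.

    Adding or removing one email changes a count by at most `sensitivity`
    (1 by default), so Laplace noise with scale sensitivity / epsilon hides
    whether any single email is in the data. Protecting a person who wrote
    many emails requires a larger sensitivity.
    """
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    return true_count + laplace_noise(sensitivity / epsilon, rng)


if __name__ == "__main__":
    rng = random.Random(42)  # seeded only so the demo is reproducible
    # Hypothetical query: emails in a training set that mention "salary".
    print(dp_count(true_count=120, epsilon=0.5, rng=rng))
```

A smaller ε adds more noise and gives stronger privacy. The noisy answer stays useful in aggregate but reveals little about any single message.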

Storage Limitation:

Personal data must not be kept longer than necessary. Tools that store email content persistently create a direct compliance liability; tools that process ephemerally and retain nothing avoid it entirely.

The CNIL requires organizations to set strict retention periods for training data. Retention for bias auditing may be justified, but data should be deleted once it's no longer needed for active development tasks.
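A retention period only helps if something enforces it. The sketch below assumes a hypothetical in-memory store where each record carries a purpose and a creation time, plus a per-purpose retention table that you set yourself. The purposes and periods shown are placeholders and are not CNIL-prescribed values.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import List, Tuple


@dataclass
class StoredRecord:
    record_id: str
    purpose: str          # e.g. "active_training" or "bias_audit"
    created_at: datetime  # timezone-aware creation timestamp


# Placeholder retention periods per purpose; set these from your own policy.
RETENTION = {
    "active_training": timedelta(days=90),
    "bias_audit": timedelta(days=365),
}


def split_expired(records: List[StoredRecord],
                  now: datetime) -> Tuple[List[StoredRecord], List[StoredRecord]]:
    """Return (keep, delete) based on each record's purpose-specific period.

    Records whose purpose has no defined period go to the delete list:
    in this sketch, data without a documented reason to keep it is not kept.
    """
    keep, delete = [], []
    for rec in records:
        period = RETENTION.get(rec.purpose)
        if period is not None and now - rec.created_at <= period:
            keep.append(rec)
        else:
            delete.append(rec)
    return keep, delete


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    records = [
        StoredRecord("a", "active_training", now - timedelta(days=30)),
        StoredRecord("b", "active_training", now - timedelta(days=120)),
        StoredRecord("c", "bias_audit", now - timedelta(days=120)),
        StoredRecord("d", "marketing", now - timedelta(days=1)),
    ]
    keep, delete = split_expired(records, now)
    print([r.record_id for r in keep], [r.record_id for r in delete])
```

Run this on a schedule and it turns the retention policy from a document into a control: bias-audit data can outlive training data, but nothing stays without a stated purpose.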

Automated Decision-Making (Article 22)

A separate concern arises when AI does more than read your email and starts acting on it without you. GDPR Article 22 gives individuals the right not to be subject to decisions based "solely on automated processing" that produce legal effects or similarly significant impacts. In email, this can apply when AI tools automatically filter, block, or deprioritize messages without human review and the outcome significantly affects someone, for example a job application that is rejected or never reaches a recruiter.

The ICO clarifies that "solely automated" means a process that is "totally automated and excludes any human influence on the outcome." A human who simply applies the system's output without exercising independent judgment does not change this, and the decision still counts as solely automated. Recital 71 and the WP29 guidelines endorsed by the EDPB cite e-recruiting practices without any human intervention as an example of a decision with significant effects.
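One way to keep a filtering feature out of "solely automated" territory is to let the AI propose actions and require a person to decide any action classed as significant. The sketch below is a hypothetical design. The `Suggestion` type, the set of significant actions, and the reviewer callback are all assumptions, and a reviewer who rubber-stamps every suggestion would still fail the ICO test.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Suggestion:
    message_id: str
    action: str  # e.g. "label", "archive", "reject_application"
    reason: str  # the model's explanation, shown to the reviewer


# Actions assumed (for this sketch) to have legal or similarly significant effects.
SIGNIFICANT_ACTIONS = {"reject_application", "block_sender"}


def apply_suggestion(
    s: Suggestion,
    review: Callable[[Suggestion], Optional[str]],
) -> str:
    """Apply low-impact actions directly; route significant ones to a human.

    `review` returns the action the person actually chose (which may differ
    from the model's suggestion), or None to take no action at all.
    """
    if s.action not in SIGNIFICANT_ACTIONS:
        return s.action
    decision = review(s)
    return decision if decision is not None else "no_action"
```

In this design the human reviewer sees the model's reason and can override it. That capacity to change the outcome is what the ICO's definition turns on.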

The Biggest Compliance Risks in AI Email Personalization

Risk 1: Unauthorized Data Sharing with AI Model Providers

When an AI email tool sends email content to a third-party LLM API, that API provider becomes a sub-processor under GDPR. Under GDPR Article 28(3), processing must be governed by a contract stipulating that the processor acts "only on documented instructions from the controller." Article 28(2) prohibits engaging another processor without prior written authorization.

If the vendor has no Zero Data Retention agreement in place, the AI provider may retain, log, or use that data for model training. Regulators expect documented technical evidence, not marketing promises or vendor assurances.

EDPB Opinion 28/2024 states that supervisory authorities (SAs) must evaluate the controller's documentation whenever a claim of anonymity is made. If an SA cannot confirm that "effective measures were taken to anonymise the AI model, the SA would be in a position to consider that the controller has failed to meet its accountability obligations."

What to ask vendors:

- Do you have a signed Data Processing Agreement with each AI provider?

- Are Zero Data Retention agreements in place with sub-processors?

- What contractual prohibitions prevent AI providers from using email data for model training?

Risk 2: Lack of Transparency About How AI Uses Email Data

GDPR requires that users be clearly informed about how their data is processed (Articles 13-14). Many AI email tools bury AI processing details in privacy policies rather than surfacing them at the point of use. This opacity is a compliance risk—particularly if AI is making decisions (like email prioritization) that affect users without their awareness.

EU AI Act Article 50 adds another layer: AI systems interacting with natural persons must be designed so users know they are interacting with AI. Generic privacy policies fall short if they don't address:

- Which AI models process email content

- How long data is retained after processing

- What automated decisions are made (and on what basis)

Risk 3: Over-Retention of Email Content for Personalization Training

Some AI email tools improve over time by retaining processed email content to refine their models. This creates a data retention problem: GDPR requires personal data to be deleted when it's no longer needed for the original purpose.

Supervisory authorities actively penalize unlawful data retention for AI. Clearview AI received multi-million fines from the UK ICO, the French CNIL, and the Dutch AP for unlawfully scraping and retaining biometric data without a valid lawful basis or transparency. The violations spanned Articles 5, 6, and 9.

Risk 4: Cross-Border Data Transfers

AI email tools based outside the EU, or relying on infrastructure in countries without an EU adequacy decision (for example, US-based LLM APIs whose providers are not certified under the EU-US Data Privacy Framework), must comply with GDPR Chapter V (Articles 44-50) on international data transfers. Without Standard Contractual Clauses (SCCs) or another Chapter V safeguard in place, routing email content through these systems breaches the transfer rules.

The European Commission issued modernized Standard Contractual Clauses in 2021. Following the Schrems II judgment, EDPB Recommendations 01/2020 require Transfer Impact Assessments (TIAs) to verify whether third-country laws undermine SCCs' effectiveness—and may require supplementary technical or contractual safeguards. Switzerland's adequacy status was confirmed in a Commission report dated January 15, 2024.
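The transfer rules reduce to a short decision procedure. The sketch below simplifies them under stated assumptions. `ADEQUATE` is a deliberately partial, illustrative set of ISO country codes, so check the Commission's current list before relying on it. The US is treated as adequate only for recipients certified under the EU-US Data Privacy Framework. Other Chapter V mechanisms, such as Binding Corporate Rules or Article 49 derogations, are left out.

```python
from dataclasses import dataclass

# Illustrative and NOT exhaustive: a few countries with EU adequacy decisions.
ADEQUATE = {"CH", "JP", "KR", "NZ"}


@dataclass
class Transfer:
    destination: str             # ISO 3166-1 alpha-2 code of the recipient's country
    in_eea: bool                 # True if the recipient is inside the EEA
    dpf_certified: bool = False  # US recipient certified under the EU-US DPF
    sccs_signed: bool = False    # Standard Contractual Clauses in place
    tia_done: bool = False       # Transfer Impact Assessment completed


def transfer_basis(t: Transfer) -> str:
    """Return the mechanism that covers the transfer, or 'NOT COVERED'."""
    if t.in_eea:
        return "no Chapter V transfer (within EEA)"
    if t.destination in ADEQUATE:
        return "adequacy decision"
    if t.destination == "US" and t.dpf_certified:
        return "adequacy decision (EU-US Data Privacy Framework)"
    # SCCs alone are not enough after Schrems II; a TIA must back them up,
    # and it may call for supplementary measures this sketch does not model.
    if t.sccs_signed and t.tia_done:
        return "SCCs + TIA"
    return "NOT COVERED"
```

With this check, a US-hosted LLM API with neither DPF certification nor SCCs plus a TIA comes back as "NOT COVERED", which is the situation the paragraph above warns against.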

How to Stay GDPR Compliant When Using AI for Email

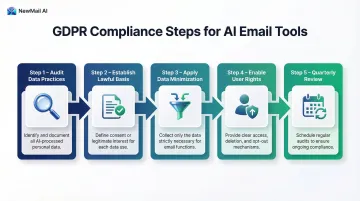

Step 1: Audit Your Current AI Email Tool's Data Practices

Before anything else, request the vendor's Data Processing Agreement (DPA) and review it thoroughly.

Key questions to document:

- Does the tool store email content? For how long?

- Which AI providers does it use as sub-processors?

- Do those providers have Zero Data Retention agreements?

- Is data processed in the EU or in an adequate country?

- Are Standard Contractual Clauses in place for non-EU transfers?

Document the answers as part of your records of processing activities (ROPA) under Article 30 — this becomes your baseline for everything that follows.
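Recording the answers in a structured form makes gaps easy to spot and easy to compare across vendors. The sketch below shows one hypothetical way to capture the Step 1 questions. The field names are assumptions and not an Article 30 template; a full ROPA entry also records purposes, categories of data subjects and data, recipients, and security measures.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class SubProcessor:
    name: str
    zero_data_retention: bool


@dataclass
class VendorAudit:
    vendor: str
    stores_email_content: bool
    retention_days: Optional[int]  # None means the vendor did not say
    sub_processors: List[SubProcessor] = field(default_factory=list)
    processing_location_adequate: bool = False  # EU/EEA or adequate country
    sccs_for_non_eu: bool = False

    def open_issues(self) -> List[str]:
        """List answers that need follow-up before the tool is approved."""
        issues = []
        if self.stores_email_content and self.retention_days is None:
            issues.append("email content stored with no stated retention period")
        for sp in self.sub_processors:
            if not sp.zero_data_retention:
                issues.append(f"no Zero Data Retention agreement with {sp.name}")
        if not self.processing_location_adequate and not self.sccs_for_non_eu:
            issues.append("non-EU processing without SCCs")
        return issues
```

An empty `open_issues()` list does not prove compliance. It only means none of these documented questions is still unanswered.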

Step 2: Establish a Clear Lawful Basis and Document It

Decide whether you're relying on consent or legitimate interest, document the reasoning, and make sure your privacy notice accurately reflects how the AI email tool processes data.

If relying on legitimate interest, complete a Legitimate Interest Assessment (LIA) that:

- Identifies the legitimate interest pursued

- Demonstrates the necessity of processing email data

- Balances your interests against the fundamental rights of data subjects

- Documents safeguards to protect those rights
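The LIA itself is a written document. Tracking it as a structured record, though, can enforce the rule that every part is complete and signed off before processing begins. A hypothetical sketch, with field names chosen for illustration:

```python
from dataclasses import dataclass
from datetime import date
from typing import List


@dataclass
class LegitimateInterestAssessment:
    interest: str           # the legitimate interest pursued
    necessity: str          # why processing email data is necessary for it
    balancing: str          # how data subjects' rights were weighed
    safeguards: List[str]   # measures that protect those rights
    completed_on: date

    def ready_for(self, processing_start: date) -> bool:
        """True only if all parts are filled in and completed before processing starts."""
        parts_filled = all(p.strip() for p in (self.interest, self.necessity, self.balancing))
        return parts_filled and bool(self.safeguards) and self.completed_on <= processing_start
```

A check like this cannot judge whether the balancing test was done well. It can only stop processing from starting before the assessment exists.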

Step 3: Apply Data Minimization in Tool Configuration

Configure AI email tools to access only the data they need. Limit mailbox access to relevant folders or time periods where possible. Disable any features that involve storing email content for model improvement unless you have explicit consent and a clear retention policy.

Ask vendors:

- Can I restrict access to specific folders or date ranges?

- Can I disable features that retain email content?

- What data is strictly necessary for core functionality?
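Scoping access by folder and date range amounts to filtering messages before they ever reach the AI. The sketch below assumes a hypothetical `Message` type with a folder name and a received timestamp. The allowed folders and the 90-day window are placeholders.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from typing import Iterable, List, Set


@dataclass
class Message:
    message_id: str
    folder: str
    received_at: datetime  # timezone-aware


def in_scope(messages: Iterable[Message], allowed_folders: Set[str],
             max_age: timedelta, now: datetime) -> List[Message]:
    """Keep only messages in approved folders and inside the date window.

    Everything else is never sent to the AI provider, so it cannot be
    retained or reused there.
    """
    cutoff = now - max_age
    return [m for m in messages
            if m.folder in allowed_folders and m.received_at >= cutoff]


if __name__ == "__main__":
    now = datetime(2025, 6, 1, tzinfo=timezone.utc)
    inbox = [
        Message("1", "Support", now - timedelta(days=10)),
        Message("2", "HR", now - timedelta(days=10)),
        Message("3", "Support", now - timedelta(days=200)),
    ]
    print([m.message_id for m in in_scope(inbox, {"Support"}, timedelta(days=90), now)])
```

In the demo, the HR message and the 200-day-old support message are excluded, leaving only message "1" for processing.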

Step 4: Enable User Rights and Opt-Out Mechanisms

Configuring the tool correctly is only half the picture. Anyone whose data flows through your AI email tool — including external contacts, not just the account holder — holds GDPR rights: access, erasure, and objection. In team environments, this extends to every third party mentioned in processed communications.

Establish clear procedures for:

- Responding to data subject access requests

- Processing erasure requests without undue delay and within one month of receipt (Article 12(3))

- Handling objection requests and pausing processing

- Notifying third parties when their data is processed
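Article 12(3) sets the response deadline for data subject requests as one month from receipt, not a fixed number of days. That period can be extended by two further months for complex or numerous requests. The helper below tracks the deadline. It assumes the reading used in EDPB guidance on the right of access: when the following month has no matching day, the deadline falls on that month's last day. The function names are illustrative.

```python
import calendar
from datetime import date


def add_months(start: date, months: int) -> date:
    """Same day-of-month `months` later, clamped to that month's last day."""
    month_index = start.month - 1 + months
    year = start.year + month_index // 12
    month = month_index % 12 + 1
    last_day = calendar.monthrange(year, month)[1]
    return date(year, month, min(start.day, last_day))


def response_deadline(received: date, extended: bool = False) -> date:
    """One month from receipt, or three months if the Article 12(3) extension applies."""
    return add_months(received, 3 if extended else 1)
```

For example, a request received on 31 January 2025 is due by 28 February 2025. If the extension is justified, it is due by 30 April 2025.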

Step 5: Review and Update on a Regular Cadence

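When a tool adds new AI features, brings on new sub-processors, or changes its retention practices, your overall GDPR standing changes with it. Article 28(2) requires processors to inform you of intended sub-processor changes, but those notices are easy to miss, and feature or policy changes may never be flagged to you directly.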

Build a quarterly review into your workflow:

- Re-read the vendor's DPA and privacy policy at each review, and again whenever a major product update is released

- Verify that sub-processor lists remain current

- Confirm data retention practices haven't changed

- Update your ROPA documentation accordingly
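Checking whether the sub-processor list is still current comes down to comparing the list you approved with the one the vendor publishes now. A minimal sketch; the provider names in the example are hypothetical.

```python
from typing import Dict, List, Set


def subprocessor_changes(approved: Set[str], current: Set[str]) -> Dict[str, List[str]]:
    """Compare the approved sub-processor list with the vendor's current one."""
    return {
        # New entries need review; Article 28(2) lets you object to them.
        "added": sorted(current - approved),
        # Dropped entries should be removed from your ROPA.
        "removed": sorted(approved - current),
    }


if __name__ == "__main__":
    print(subprocessor_changes({"ProviderA", "ProviderB"}, {"ProviderB", "ProviderC"}))
```

Any name under "added" should trigger the same DPA and Zero Data Retention questions as the original audit.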

What to Look for in a GDPR-Compliant AI Email Tool

Architecture Over Policy: Ephemeral vs. Persistent Processing

The architecture distinction that matters most: tools that process email ephemerally (reading content temporarily to perform a task, then discarding it) carry lower risk by design than tools that store email content persistently.
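In code, the difference comes down to whether email content ever reaches durable storage. Below is a minimal sketch of the ephemeral pattern. It assumes a hypothetical `model` callable standing in for an LLM API call: the content exists only in local variables for the duration of the request, and only the derived draft is returned. The sketch covers the application side only. Whether the model provider retains inputs depends on its own terms, which is why Zero Data Retention agreements still matter.

```python
from typing import Callable


def draft_reply_ephemeral(email_body: str, model: Callable[[str], str]) -> str:
    """Process an email in memory and return only the derived draft.

    This function writes nothing to disk, a database, or logs, and keeps no
    reference to `email_body` after it returns. ("Ephemeral" here means not
    persisted; Python cannot guarantee the string is wiped from memory.)
    """
    prompt = f"Draft a short, polite reply to:\n{email_body}"
    return model(prompt)
```

A persistent design would add a step such as saving `email_body` to a database "for later personalization". That one line is what turns a processing activity into a storage-limitation liability.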

Look for vendors that explicitly state zero email storage by default and can demonstrate this with technical documentation—not just a policy statement. GDPR Article 25 requires implementing technical and organizational measures "such as pseudonymisation, which are designed to implement data-protection principles, such as data minimisation." EDPB Guidelines 4/2019 state that controllers must account for the "state of the art" continuously.

For AI specifically, the CNIL recommends "ensuring timely anonymization of collected data or, if not feasible, applying pseudonymization," along with "implementing measures to reduce the risk of model memorization."
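Pseudonymization can happen before content leaves your infrastructure, for example by replacing email addresses with keyed tokens. The sketch below uses HMAC-SHA256 from Python's standard library, and the function name is illustrative. The regex is a simplified assumption and will not match every valid address. Because whoever holds the key can re-link tokens to people, the output is still personal data under GDPR: it is pseudonymized, not anonymized.

```python
import hashlib
import hmac
import re

# Simplified pattern; real-world address parsing is more involved.
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def pseudonymize(text: str, key: bytes) -> str:
    """Replace each email address with a stable keyed token.

    Under the same key, the same address always maps to the same token, so
    a model can still tell participants apart without seeing the address.
    Addresses are lowercased first so case variants share a token (a
    simplification). Store `key` separately from the pseudonymized data.
    """
    def token(match: re.Match) -> str:
        digest = hmac.new(key, match.group(0).lower().encode(), hashlib.sha256)
        return f"<person:{digest.hexdigest()[:12]}>"

    return EMAIL_RE.sub(token, text)
```

Using a keyed HMAC rather than a plain hash matters here. Without the key, an attacker cannot rebuild the mapping by hashing a list of known addresses.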

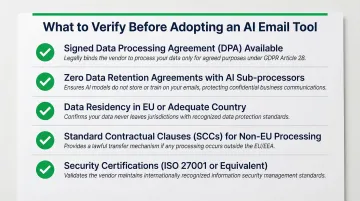

Key Certifications and Agreements to Verify

Before committing to any AI email tool, verify the following:

- Signed Data Processing Agreement (DPA) available upon request

- Zero Data Retention agreements with any AI sub-processors (such as Anthropic or Mistral)

- Data residency in the EU or an adequate country

- Standard Contractual Clauses for non-EU processing

- Security certifications such as Google Security Certified or ISO 27001

What Privacy-by-Design Looks Like in Practice

NewMail AI was built from the ground up with ephemeral processing, zero email storage by default, Zero Data Retention agreements with its AI providers (Anthropic and Mistral), and Swiss-based infrastructure.

Emails pass through the system temporarily for analysis, then are immediately deleted. The only data retained is encrypted context that users can view, edit, or delete at any time — so compliance is built into how the product works, not just how it's described.

Red Flags That Indicate Non-Compliance

Be wary of tools that exhibit these warning signs:

- No DPA available or provided only after extensive negotiation

- Vague language about "data may be used to improve our services"

- AI providers not disclosed or listed generically ("third-party AI services")

- Data processed on US servers without Standard Contractual Clauses

- No clear deletion mechanism for user data

- ISO 27001 or security certifications presented as proof of GDPR compliance (they demonstrate security maturity but don't guarantee GDPR compliance)

- Marketing claims of "zero retention" without technical documentation

Frequently Asked Questions

How to make emails GDPR compliant?

Obtain valid opt-in consent before sending marketing emails, include a clear unsubscribe mechanism in every message, and collect only the data necessary for the stated purpose. If AI tools are used to draft or manage those emails, verify that the vendor has a Data Processing Agreement (DPA), processes data lawfully, and respects data minimization principles.

Is using AI GDPR compliant?

AI is not inherently non-compliant, but tools must process personal data with a valid legal basis, data minimization, and proper sub-processor agreements. The compliance burden falls on your organization—you remain the data controller and must conduct due diligence on any AI tool handling personal data.

Does GDPR apply to personal emails?

GDPR's household exemption (Article 2(2)(c), explained in Recital 18) excludes purely personal or household activity, including personal emails, from its scope. However, Recital 18 also states that GDPR still applies to controllers or processors that provide the means for such processing. A commercial AI tool analyzing your personal emails is therefore covered: the exemption protects you as an individual, not the vendor.

Do emails get flagged for AI?

Many email providers and AI tools analyze content for spam filtering, prioritization, or personalization. Under GDPR, users must be informed when AI processes their emails, and a lawful basis—consent or legitimate interest—must exist for that processing. Security-related analysis (malware, phishing detection) is generally permitted, but must be proportionate.

What data do AI email tools collect, and how does GDPR apply?

AI email tools typically process content, metadata (sender, recipient, timestamps), and behavioral data—all personal data under GDPR. Each processing activity requires a lawful basis, retention limits, and transparency. Tools that retain email content for model training carry significantly higher compliance risk than those that process data ephemerally and store only encrypted context.

Can my AI email assistant share my email data with third parties?

Most AI email tools route content through third-party LLM APIs, making those providers sub-processors under GDPR Article 28. The vendor must hold a DPA and Zero Data Retention agreements with each sub-processor. Before adopting any AI email tool, request the full sub-processor list and confirm your data is not used for model training.